Click here for registration and download

Welcome to the DENSE datasets

The EU project DENSE seeks to eliminate one of the most pressing problems of automated driving: the inability of current systems to sense their surroundings under all weather conditions. Severe weather, such as snow, heavy rain or fog, has long been viewed as one of the last remaining technical challenges preventing self-driving cars from being brought to market. This can only be overcome by developing a fully reliable environment perception technology.

This homepage presents the projects and datasets developed during the project.

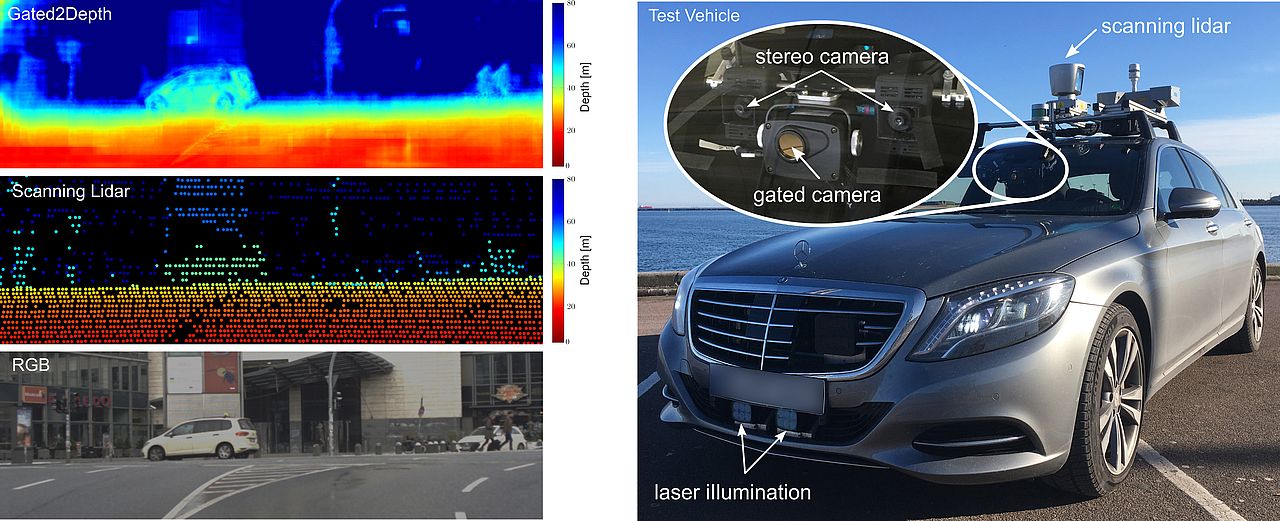

- Gated2Depth: Imaging framework which converts three images from a gated camera into high-resolution depth maps with depth accuracy comparable to pulsed lidar measurements.

- Seeing Through Fog: Novel multi-modal dataset with 12,000 samples under different weather and illumination conditions and 1,500 measurements acquired in a fog chamber. Furthermore, the dataset contains accurate human annotations: objects are labeled with 2D and 3D bounding boxes, and frame tags describe the weather, daytime and street condition.

- Gated2Gated: Self-supervised imaging framework which estimates absolute depth maps from three gated images and uses cycle and temporal consistency as training signals. This work provides an extension of the Seeing Through Fog dataset with synchronized temporal history frames for each keyframe. Each keyframe contains synchronized lidar data and fine-grained scene and object annotations.

- Pixel Accurate Depth Benchmark: Evaluation benchmark for depth estimation and completion under varying weather conditions using high-resolution depth.

- Image Enhancement Benchmark: A benchmark for image enhancement methods.

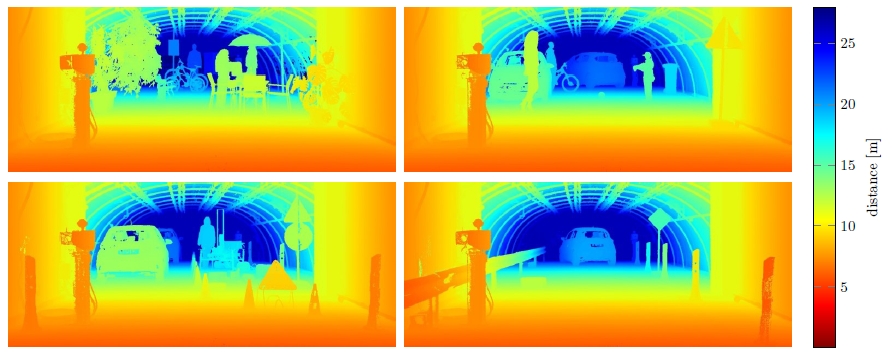

We present an imaging framework which converts three images from a low-cost CMOS gated camera into high-resolution depth maps with depth accuracy comparable to pulsed lidar measurements. The main contributions are:

- We propose a learning-based approach for estimating dense depth from gated images, without the need for dense depth labels for training.

- We validate the proposed method in simulation and on real-world measurements acquired with a prototype system in challenging automotive scenarios. We show that the method recovers dense depth up to 80 m with depth accuracy comparable to scanning lidar.

- Dataset: We provide the first long-range gated dataset, covering over 4,000 km of driving throughout Northern Europe.

How to use the Dataset

We provide documented tools for handling the dataset in Python. In addition, the model is provided in TensorFlow.

Click here!

Reference

If you find our work on gated depth estimation useful in your research, please consider citing our paper:

@inproceedings{gated2depth2019,
  title = {Gated2Depth: Real-Time Dense Lidar From Gated Images},
  author = {Gruber, Tobias and Julca-Aguilar, Frank and Bijelic, Mario and Heide, Felix},
  booktitle = {The IEEE International Conference on Computer Vision (ICCV)},
  year = {2019}
}

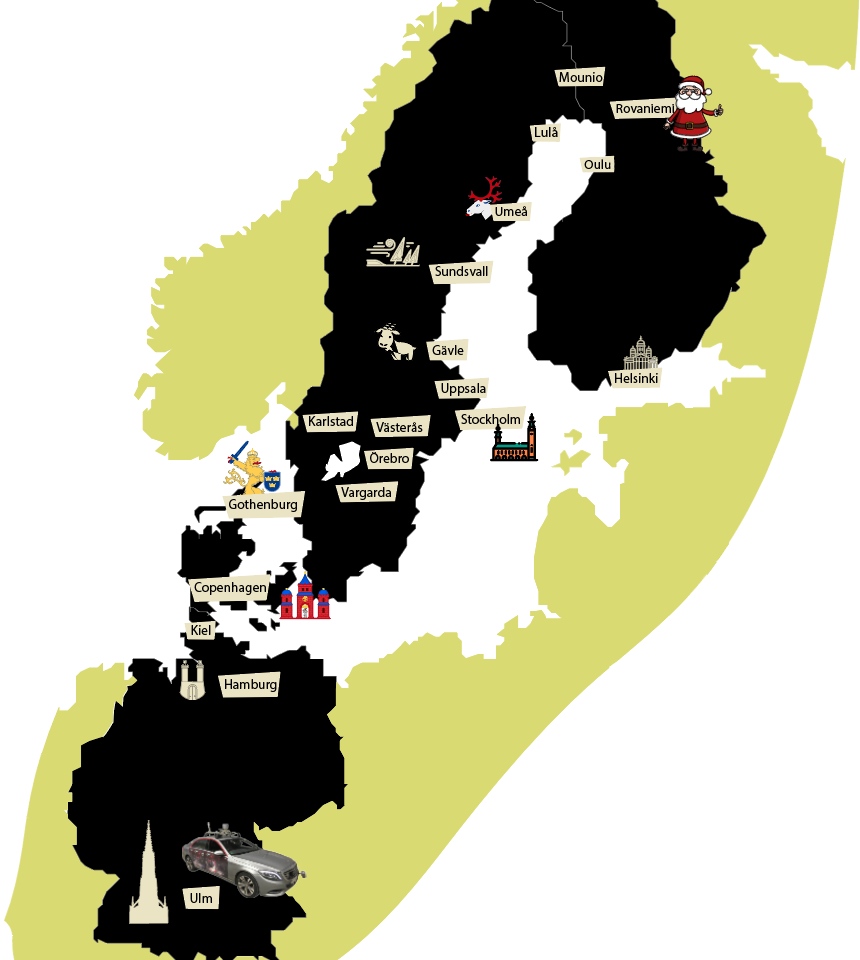

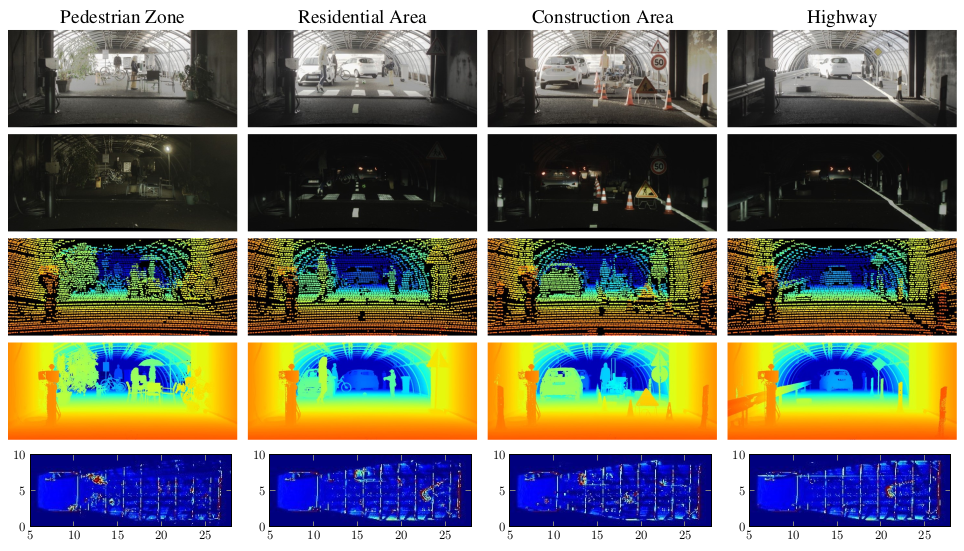

We introduce an object detection dataset in challenging adverse weather conditions covering 12,000 samples in real-world driving scenes and 1,500 samples in controlled weather conditions within a fog chamber. The dataset includes different weather conditions such as fog, snow, and rain and was acquired over more than 10,000 km of driving in northern Europe. The driven route with cities along the road is shown on the right. In total, 100k objects were labeled with accurate 2D and 3D bounding boxes. The main contributions of this dataset are:

- We provide a proving ground for a broad range of algorithms covering signal enhancement, domain adaptation, object detection, or multi-modal sensor fusion, focusing on the learning of robust redundancies between sensors, especially if they fail asymmetrically in different weather conditions.

- The dataset was created with the initial intention of showcasing methods that learn robust redundancies between the sensors and enable raw-data sensor fusion in case of asymmetric sensor failure induced by adverse weather effects.

- In our case, we departed from proposal-level fusion and applied an adaptive fusion driven by measurement entropy, which enables detection even under unknown adverse weather effects. This method outperforms other reference fusion methods, which even fall below single-image methods.

- Please check out our paper for more information. Click here for our paper at CVF.
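To illustrate the idea of steering fusion by measurement entropy, here is a minimal sketch. It is not the fusion architecture from the paper: the sensor names, the toy pixel values and the simple normalization of entropies into weights are all assumptions made for this example.

```python
import math
from collections import Counter

def shannon_entropy(pixels):
    """Shannon entropy (in bits) of the intensity histogram of a pixel list."""
    counts = Counter(pixels)
    total = len(pixels)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

def entropy_weights(sensor_pixels):
    """Normalize per-sensor entropies into fusion weights that sum to one."""
    entropies = {name: shannon_entropy(p) for name, p in sensor_pixels.items()}
    total = sum(entropies.values())
    if total == 0.0:
        # No sensor carries information: fall back to uniform weights.
        return {name: 1.0 / len(entropies) for name in entropies}
    return {name: h / total for name, h in entropies.items()}

# Toy measurements (hypothetical): the camera is washed out by fog and shows
# almost constant values, while the second sensor still shows structure.
sensors = {
    "camera": [200] * 60 + [201] * 4,
    "gated": list(range(0, 256, 4)),
}
weights = entropy_weights(sensors)
print(weights)
```

In this toy case the low-entropy camera measurement receives a much smaller weight than the sensor that still carries structure, which is the intuition behind down-weighting a sensor that fails in a given weather condition.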

Videos

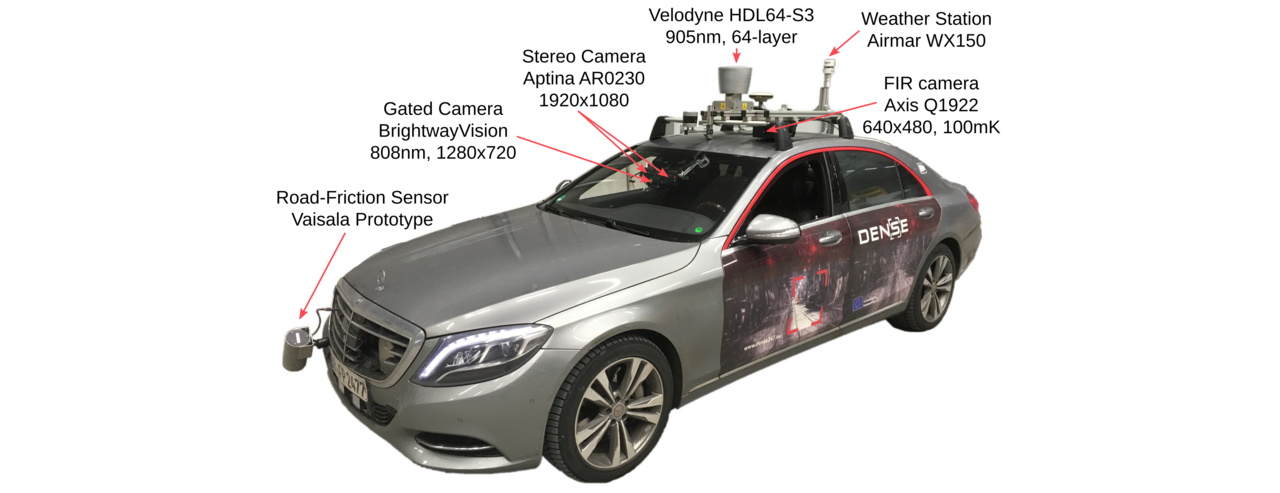

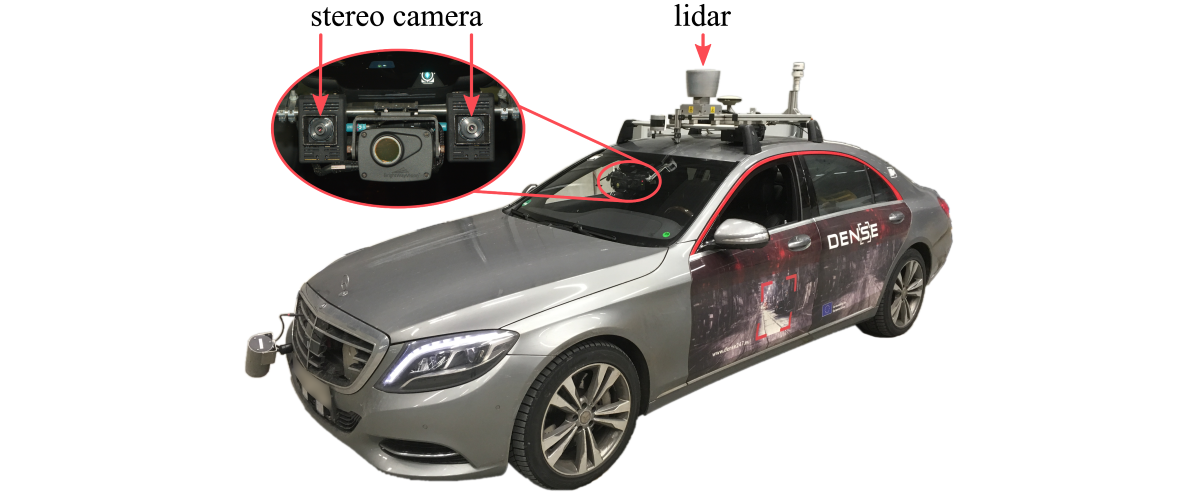

Sensor Setup

To capture the dataset, we equipped a test vehicle with sensors covering the visible, mm-wave, NIR, and FIR bands; see the figure below. We measure intensity, depth, and weather conditions. For more information, please refer to our dataset paper.

How to use the Dataset

We provide documented tools for handling our provided dataset in Python.

Click here!

Reference

If you find our work on object detection in adverse weather useful in your research, please consider citing our paper:

@InProceedings{Bijelic_2020_CVPR,
  author = {Bijelic, Mario and Gruber, Tobias and Mannan, Fahim and Kraus, Florian and Ritter, Werner and Dietmayer, Klaus and Heide, Felix},
  title = {Seeing Through Fog Without Seeing Fog: Deep Multimodal Sensor Fusion in Unseen Adverse Weather},
  booktitle = {The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month = {June},
  year = {2020}
}

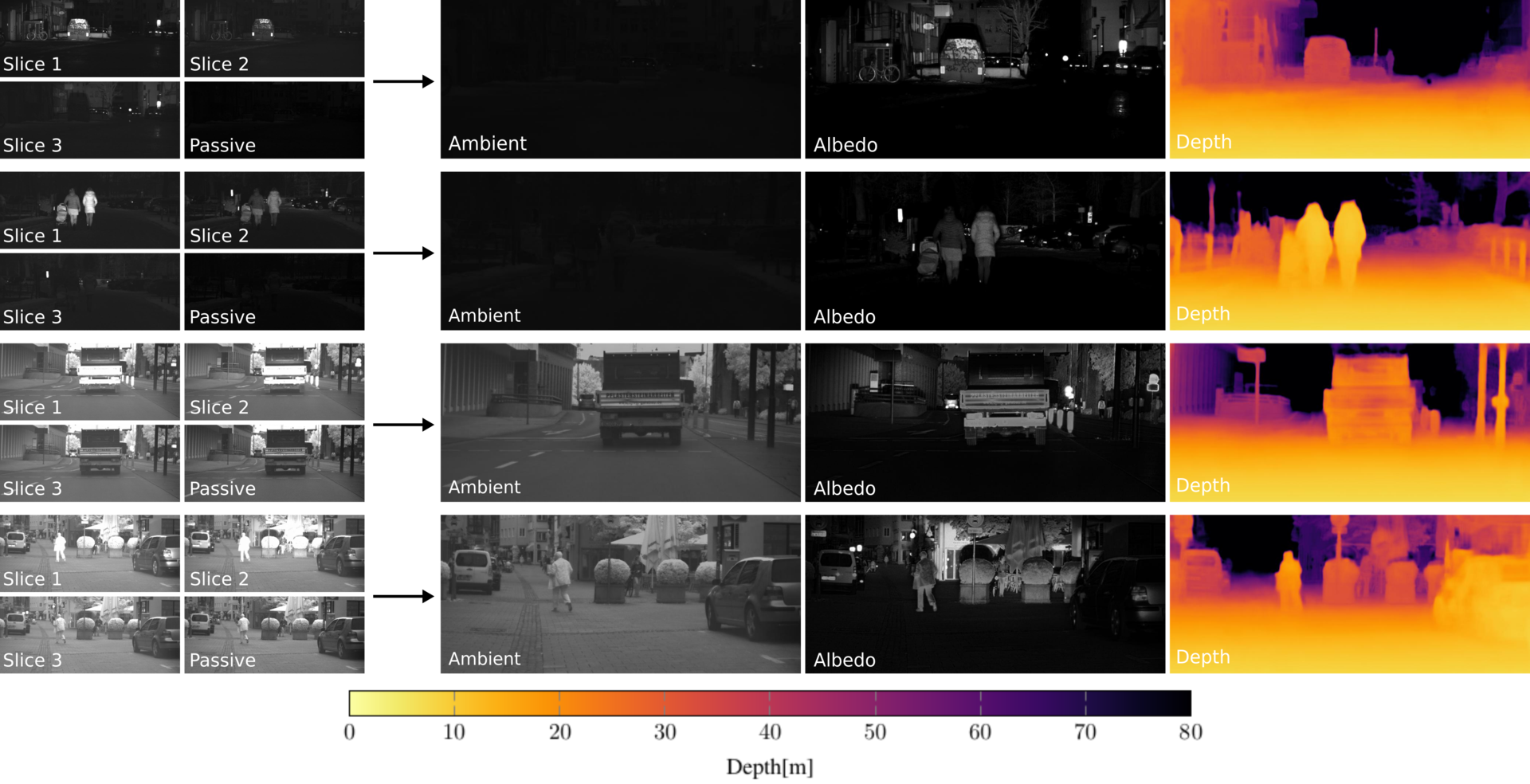

We present a self-supervised imaging framework, which estimates dense depth from a set of three gated images without any ground truth depth for supervision. By predicting scene albedo, depth and ambient illumination, we reconstruct the input gated images and enforce cycle consistency as can be seen in the title picture. In addition, we use view synthesis to introduce temporal consistency between adjacent gated images.

The main contributions are:

- We propose the first self-supervised method for depth estimation from gated images that learns depth prediction without ground truth depth.

- We validate that the proposed method outperforms the previous state of the art, including fully supervised methods, in challenging weather conditions such as dense fog or snowfall, where lidar data is too cluttered to be used for direct supervision.

- Dataset: We provide the first temporal gated imaging dataset, consisting of around 130,000 samples captured in clear and adverse weather conditions, as an extension of the labeled Seeing Through Fog dataset, which provides detailed scene annotations, object labels and corresponding multi-modal data.
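The cycle-consistency idea described above can be sketched for a single pixel. The sketch assumes a simple image formation model in which each gated intensity is the albedo times a range-intensity profile evaluated at the pixel depth, plus ambient light. The triangular profiles and their gate settings are hypothetical placeholders, not the calibrated profiles of the gated camera, and the real method predicts these quantities with a network rather than per pixel by hand.

```python
def triangular_profile(depth_m, center_m, half_width_m):
    """Hypothetical range-intensity profile: peaks at center_m, zero outside."""
    return max(0.0, 1.0 - abs(depth_m - center_m) / half_width_m)

# Hypothetical gate settings for the three slices: (center, half width) in meters.
GATES = [(20.0, 25.0), (45.0, 30.0), (75.0, 35.0)]

def render_gated(albedo, depth_m, ambient):
    """Reconstruct the three gated intensities of one pixel."""
    return [albedo * triangular_profile(depth_m, c, w) + ambient for c, w in GATES]

def cycle_loss(measured, albedo, depth_m, ambient):
    """Mean absolute error between measured and reconstructed gated intensities."""
    reconstructed = render_gated(albedo, depth_m, ambient)
    return sum(abs(m - r) for m, r in zip(measured, reconstructed)) / len(measured)

# A pixel observed at 40 m: the correct depth reproduces the measurement,
# while a wrong depth estimate leaves a reconstruction error.
measured = render_gated(albedo=0.6, depth_m=40.0, ambient=0.05)
loss_correct = cycle_loss(measured, albedo=0.6, depth_m=40.0, ambient=0.05)
loss_wrong = cycle_loss(measured, albedo=0.6, depth_m=60.0, ambient=0.05)
print(loss_correct, loss_wrong)
```

Because a wrong depth changes the reconstructed gated intensities, the reconstruction error can serve as a training signal without any ground truth depth.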

How to use the Dataset

We provide documented tools for handling the dataset in Python. In addition, the proposed model is implemented in PyTorch.

Click here!

Reference

If you find our work on self-supervised gated depth estimation useful in your research, please consider citing our two papers:

@article{gated2gated,
  title={Gated2Gated: Self-Supervised Depth Estimation from Gated Images},
  author={Walia, Amanpreet and Walz, Stefanie and Bijelic, Mario and Mannan, Fahim and Julca-Aguilar, Frank and Langer, Michael and Ritter, Werner and Heide, Felix},
  journal={arXiv preprint arXiv:2112.02416},
  year={2021}
}

and

@InProceedings{Bijelic_2020_CVPR,
  author = {Bijelic, Mario and Gruber, Tobias and Mannan, Fahim and Kraus, Florian and Ritter, Werner and Dietmayer, Klaus and Heide, Felix},
  title = {Seeing Through Fog Without Seeing Fog: Deep Multimodal Sensor Fusion in Unseen Adverse Weather},
  booktitle = {The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month = {June},
  year = {2020}
}

We introduce an evaluation benchmark for depth estimation and completion using high-resolution depth measurements with angular resolution of up to 25” (arcsecond), akin to a 50 megapixel camera with per-pixel depth available. The main contributions of this dataset are:

- High-resolution ground truth depth: annotated ground truth of angular resolution 25” – an order of magnitude higher than existing lidar datasets with angular resolution of 300”.

- Fine-Grained Evaluation under defined conditions: 1,600 samples of four automotive scenarios recorded in defined weather (clear, rain, fog) and illumination (daytime, night) conditions.

- Multi-modal sensors: In addition to monocular camera images, we provide a calibrated sensor setup with a stereo camera and a lidar scanner.
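To get a feel for these angular resolutions, the following sketch converts arcseconds into the lateral spacing between neighboring depth samples at a given distance. The 80 m distance is an illustrative choice for this example, not a value taken from the benchmark.

```python
import math

ARCSEC_PER_DEGREE = 3600.0

def lateral_spacing_m(angular_resolution_arcsec, distance_m):
    """Spacing between two neighboring samples at the given distance."""
    angle_rad = math.radians(angular_resolution_arcsec / ARCSEC_PER_DEGREE)
    return distance_m * math.tan(angle_rad)

benchmark_arcsec = 25.0  # ground truth resolution stated above
lidar_arcsec = 300.0     # resolution of existing lidar datasets stated above
distance_m = 80.0        # illustrative distance (assumption)

ratio = lidar_arcsec / benchmark_arcsec
spacing_benchmark_cm = lateral_spacing_m(benchmark_arcsec, distance_m) * 100.0
spacing_lidar_cm = lateral_spacing_m(lidar_arcsec, distance_m) * 100.0

print(f"resolution ratio: {ratio:.0f}x")
print(f"benchmark spacing at {distance_m:.0f} m: {spacing_benchmark_cm:.1f} cm")
print(f"lidar spacing at {distance_m:.0f} m: {spacing_lidar_cm:.1f} cm")
```

At this distance, neighboring ground truth samples are about 1 cm apart, compared with roughly 12 cm for a sensor with 300” resolution.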

How to use the Dataset

We provide documented tools for evaluation and visualization in Python in our GitHub repository.

Click here!

Dataset Overview

We model four typical automotive outdoor scenarios from the KITTI dataset. Specifically, we set up the following four realistic scenarios: pedestrian zone, residential area, construction area and highway. This benchmark consists of 10 randomly selected samples of each scenario (day/night) in clear weather, light rain, heavy rain and 17 visibility levels in fog (20-100 m in 5 m steps), resulting in 1,600 samples in total.
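The sample count can be checked with a few lines of arithmetic. The sketch assumes that 10 samples are drawn per scenario, per illumination setting and per weather condition, which is one reading of the description above; the variable names are illustrative.

```python
# Reproduce the benchmark size from the description above.
scenarios = ["pedestrian zone", "residential area", "construction area", "highway"]
illuminations = ["day", "night"]

# Fog visibility levels: 20 m to 100 m in 5 m steps (inclusive).
fog_visibilities_m = list(range(20, 101, 5))

# Weather conditions: clear, light rain, heavy rain, plus one per fog level.
weather_conditions = ["clear", "light rain", "heavy rain"] + [
    f"fog {v} m" for v in fog_visibilities_m
]

# Assumption: 10 samples per (scenario, illumination, weather) combination.
samples_per_setting = 10

total_samples = (
    len(scenarios)
    * len(illuminations)
    * len(weather_conditions)
    * samples_per_setting
)

print(len(fog_visibilities_m))  # number of fog visibility levels
print(len(weather_conditions))  # number of weather conditions
print(total_samples)            # total number of benchmark samples
```

Under this reading, the 17 fog levels plus three other weather conditions give 20 conditions, and 4 x 2 x 20 x 10 matches the stated 1,600 samples.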

Sensor Setup

To acquire realistic automotive sensor data, which serves as input for the depth estimation methods assessed in this work, we equipped a research vehicle with an RGB stereo camera (Aptina AR0230, 1920x1024, 12 bit) and a lidar (Velodyne HDL64-S3, 905 nm). All sensors run in a Robot Operating System (ROS) environment and are time-synchronized by a pulse-per-second (PPS) signal provided by a proprietary inertial measurement unit (IMU).

Reference

If you find our work on benchmarking depth algorithms useful in your research, please consider citing our paper:

@InProceedings{Bijelic_2020_CVPR,
  author = {Bijelic, Mario and Gruber, Tobias and Mannan, Fahim and Kraus, Florian and Ritter, Werner and Dietmayer, Klaus and Heide, Felix},
  title = {Seeing Through Fog Without Seeing Fog: Deep Multimodal Sensor Fusion in Unseen Adverse Weather},
  booktitle = {The IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month = {June},
  year = {2020}
}

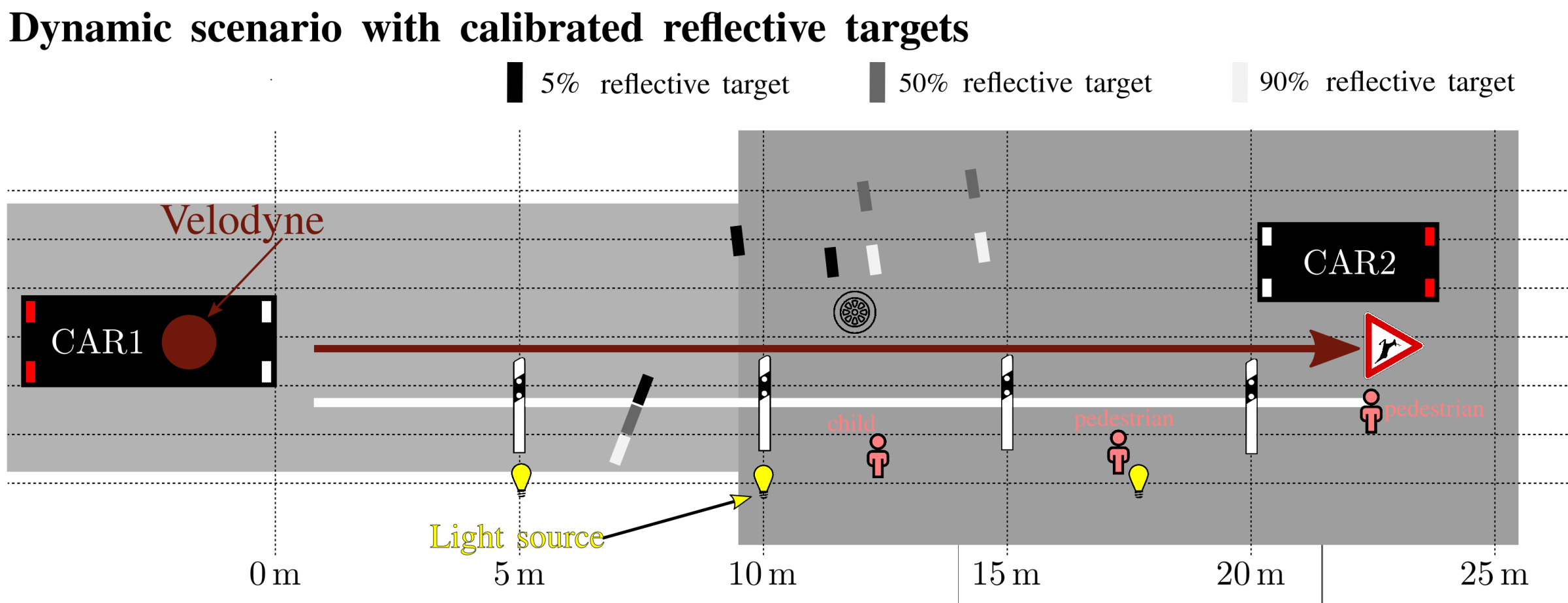

Because collecting data in adverse weather, especially in fog, is difficult, most approaches in the field of image de-hazing are based on synthetic datasets and standard metrics that mostly originate from general tasks such as image denoising or deblurring. To evaluate the performance of such a system, it is necessary to have real data and an adequate metric. We introduce a novel calibrated benchmark dataset recorded in real, well-defined weather conditions. To this end, we captured moving calibrated reflectance targets at continuously changing distances under challenging illumination in a controlled environment. This enables a direct calculation of the contrast between the respective reflectance targets and yields an easily interpretable enhancement result in terms of visible contrast.

In summary, our main contributions are:
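As an illustration of how the contrast between two reflectance targets might be computed, the sketch below uses Michelson contrast on the mean intensities of two image regions. The choice of Michelson contrast, the region coordinates and the simple fog model are assumptions made for this example; the metric defined in the paper may differ.

```python
from statistics import fmean

def region_mean(image, region):
    """Mean intensity of a region given as (row_start, row_stop, col_start, col_stop)."""
    r0, r1, c0, c1 = region
    return fmean(v for row in image[r0:r1] for v in row[c0:c1])

def michelson_contrast(image, region_a, region_b):
    """Michelson contrast between the mean intensities of two regions."""
    mean_a = region_mean(image, region_a)
    mean_b = region_mean(image, region_b)
    total = mean_a + mean_b
    if total == 0.0:
        return 0.0  # both regions are black, no measurable contrast
    return abs(mean_a - mean_b) / total

# Synthetic scene: a bright and a dark target on a uniform background.
BRIGHT = (40, 60, 40, 60)
DARK = (40, 60, 140, 160)
clear = [[0.5] * 200 for _ in range(100)]
for r in range(40, 60):
    for c in range(40, 60):
        clear[r][c] = 0.9   # high-reflectance target
    for c in range(140, 160):
        clear[r][c] = 0.1   # low-reflectance target

# Very simple fog stand-in: blend the scene towards a uniform airlight value.
transmission = 0.3  # hypothetical value
airlight = 0.8      # hypothetical value
foggy = [[transmission * v + (1.0 - transmission) * airlight for v in row] for row in clear]

contrast_clear = michelson_contrast(clear, BRIGHT, DARK)
contrast_foggy = michelson_contrast(foggy, BRIGHT, DARK)
print(contrast_clear, contrast_foggy)
```

An enhancement method could then be judged by how much of the clear-weather contrast between the two targets it recovers from the foggy image.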

- We provide a novel benchmark dataset recorded under defined and adjustable real weather conditions.

- We introduce a metric describing the performance of image enhancement methods in a more intuitive and interpretable way.

- We compare a variety of state-of-the-art image enhancement methods and metrics based on our novel benchmark dataset.

To capture the dataset, we placed our research vehicle in a fog chamber and moved 50 cm x 50 cm diffuse Zenith Polymer targets along the red line below. For more information, please refer to our paper. The static scenario used is currently part of the Pixel Accurate Depth Benchmark.

Example Images

Above, we show example images in clear conditions (left) and in fog (right) at a visibility of 30 m to 40 m.

Reference

If you find our work on benchmarking enhancement algorithms useful in your research, please consider citing our paper:

@InProceedings{Bijelic_2019_CVPR_Workshops,
  author = {Bijelic, Mario and Kysela, Paula and Gruber, Tobias and Ritter, Werner and Dietmayer, Klaus},
  title = {Recovering the Unseen: Benchmarking the Generalization of Enhancement Methods to Real World Data in Heavy Fog},
  booktitle = {The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},
  month = {June},
  year = {2019}
}

Community Contributions

Point Cloud Denoising

For the challenging task of lidar point cloud de-noising, we rely on the Pixel Accurate Depth Benchmark and the Seeing Through Fog dataset recorded under adverse weather conditions like heavy rain or dense fog. In particular, we use the point clouds from a Velodyne VLP32c lidar sensor.

How to use the Dataset

We provide documented tools for visualization in Python in our GitHub repository.

Click here!

Reference

If you find our work on lidar point cloud de-noising in adverse weather useful for your research, please consider citing our paper:

@article{PointCloudDeNoising2019,
  author = {Heinzler, Robin and Piewak, Florian and Schindler, Philipp and Stork, Wilhelm},
  journal = {IEEE Robotics and Automation Letters},
  title = {CNN-based Lidar Point Cloud De-Noising in Adverse Weather},
  year = {2020},
  keywords = {Semantic Scene Understanding;Visual Learning;Computer Vision for Transportation},
  doi = {10.1109/LRA.2020.2972865},
  ISSN = {2377-3774}
}

Acknowledgements

These works have received funding from the European Union under the H2020 ECSEL Programme as part of the DENSE project, contract number 692449.

Furthermore, we would like to thank Cerema for hosting and supporting the measurements in controlled weather conditions. More information about the fog chamber can be found here.

Contact

Feedback? Questions? Any problems/errors? Do not hesitate to contact us!

- Werner Ritter, werner.r.ritter(at)daimler.com

- Tobias Gruber, tobias.gruber(at)daimler.com

- Mario Bijelic, mario.bijelic(at)daimler.com

- Felix Heide, felix.heide(at)algolux.com