ADUULM-Dataset

One of the key challenges of today’s semantic segmentation approaches is to obtain robust and reliable segmentation results not only in good weather conditions, but also in adverse weather conditions such as darkness, fog or heavy rain. For this purpose, data from several sensor types such as camera and lidar are required to compensate for the weather sensitivity of individual sensors. The problem with current segmentation datasets such as Cityscapes, BDD or ApolloScape is that they do not provide a multi-sensor setup, which is necessary for robust semantic segmentation in adverse weather conditions. This motivated us to construct the ADUUlm-Dataset, a dataset that consists of camera and lidar data together with the corresponding temporal information. The main contributions of the ADUUlm-Dataset are:

- It is one of the first semantic segmentation datasets that contains finely annotated camera images and pixel-wise labeled lidar data and provides temporal information in short ROS sequences.

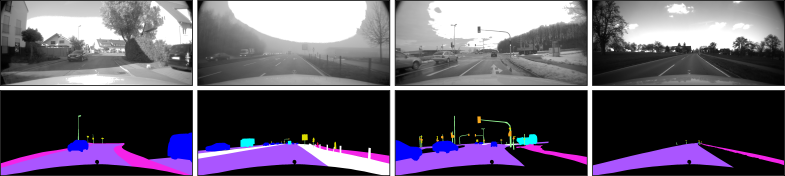

- The dataset consists of a large number of data samples recorded in diverse adverse weather conditions such as heavy rain, darkness and fog.

- For more information, please see our paper at BMVC 2020 (www.bmvc2020-conference.com/assets/papers/0474.pdf).

Dataset Overview

- Urban and rural scenarios in the surroundings of Ulm (Germany) in diverse weather conditions (e.g. sunny, rainy, foggy, night, ...)

- Multi-sensor setup: cameras, lidars, stereo camera, GPS, IMU

- 12 classes (car, truck, bus, motorbike, pedestrian, bicyclist, traffic-sign, traffic-light, road, sidewalk, pole, unlabelled)

- 3482 finely annotated camera images

- Training data: 1414 (good weather conditions only)

- Validation data: 508 (good weather conditions only)

- Test data: 1559 (different adverse weather conditions)

- Suitable for tasks such as semantic segmentation and drivable area detection
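To work with the 12 classes listed above, it can help to map class names to integer IDs and count how many pixels of a label mask fall into each class (for example, to inspect class imbalance). The sketch below assumes, purely for illustration, that label IDs follow the order of the list (0 = car, ..., 11 = unlabelled); `CLASSES`, `CLASS_TO_ID` and `class_pixel_counts` are hypothetical names, and the real label encoding should be taken from the official tools.

```python
from collections import Counter
from typing import Dict, Iterable, List

# The 12 classes from the overview. ASSUMPTION: the ID of each class is its
# position in this list; the official label encoding may differ.
CLASSES: List[str] = [
    "car", "truck", "bus", "motorbike", "pedestrian", "bicyclist",
    "traffic-sign", "traffic-light", "road", "sidewalk", "pole", "unlabelled",
]
CLASS_TO_ID: Dict[str, int] = {name: idx for idx, name in enumerate(CLASSES)}


def class_pixel_counts(label_mask: Iterable[Iterable[int]]) -> Dict[str, int]:
    """Count pixels per class in a 2-D mask of (assumed) class IDs.

    Works on nested lists or on 2-D NumPy integer arrays.
    """
    counts = Counter(int(value) for row in label_mask for value in row)
    # Reject IDs outside the assumed 0..11 range instead of silently ignoring them.
    unknown = set(counts) - set(range(len(CLASSES)))
    if unknown:
        raise ValueError(f"unexpected label IDs: {sorted(unknown)}")
    return {name: counts.get(idx, 0) for idx, name in enumerate(CLASSES)}
```

For a tiny mask `[[8, 8, 9], [0, 11, 8]]`, `class_pixel_counts` reports 3 pixels of `road`, 1 each of `sidewalk`, `car` and `unlabelled`, and 0 for all other classes.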

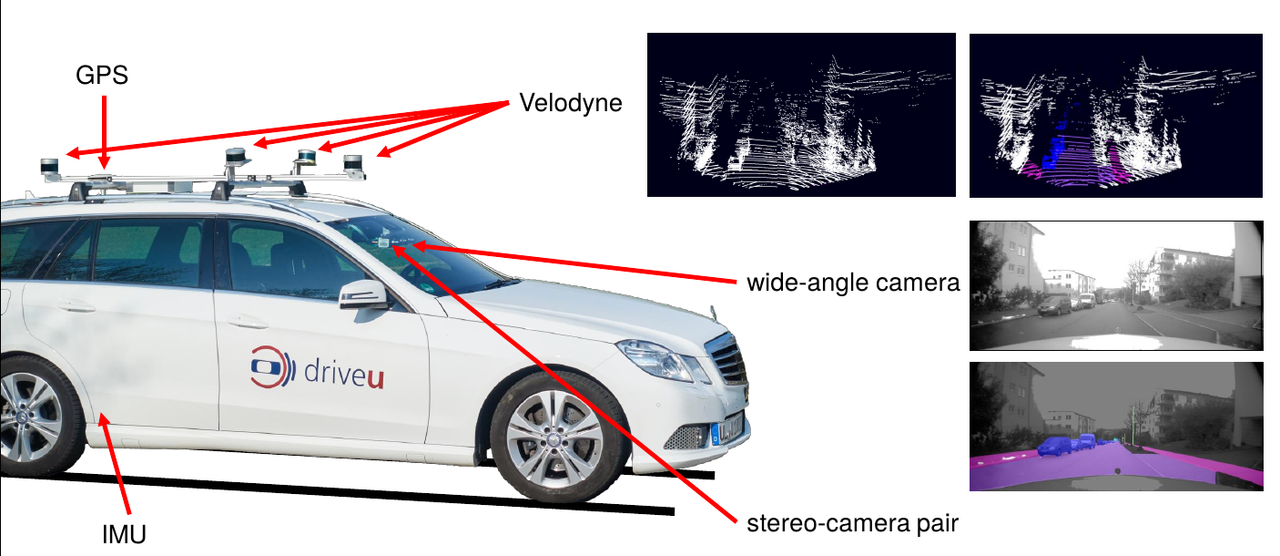

Data Acquisition and Sensor-Setup

This dataset was recorded with our test vehicle while driving in and around Ulm (Germany) at different times of day, in different seasons and in several good and adverse weather conditions such as fog, snow or (heavy) rain. Our test vehicle is equipped with the following sensors:

• wide-angle camera with a resolution of 1920x1080

• stereo camera pair with a resolution of 1920x1080

• rear camera with a resolution of 1392x1040

• four lidar sensors (Velodyne 16/32) mounted on the car's roof

• IMU

• GPS
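When writing fusion code, the sensor setup above can be captured in a small immutable description so that, for example, all cameras or all lidars can be selected by type. This is a minimal sketch: the sensor names (`wide_angle`, `lidar_0`, ...) are hypothetical and not the dataset's file or topic names, the stereo pair is modeled as two separate 1920x1080 cameras, and resolutions are assumed to be (width, height). Which of the four lidars is a 16- or 32-beam model is not encoded because the text does not specify it.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Sensor:
    name: str  # hypothetical identifier, not an official file/topic name
    kind: str  # "camera", "lidar", "imu" or "gps"
    resolution: Optional[Tuple[int, int]] = None  # (width, height), cameras only


# Sensor setup as listed above; the names are illustrative assumptions.
SENSORS: Tuple[Sensor, ...] = (
    Sensor("wide_angle", "camera", (1920, 1080)),
    Sensor("stereo_left", "camera", (1920, 1080)),
    Sensor("stereo_right", "camera", (1920, 1080)),
    Sensor("rear", "camera", (1392, 1040)),
    *(Sensor(f"lidar_{i}", "lidar") for i in range(4)),  # four roof-mounted lidars
    Sensor("imu", "imu"),
    Sensor("gps", "gps"),
)


def sensors_of_kind(kind: str) -> List[Sensor]:
    """Return all sensors of the given kind, in the order listed above."""
    return [sensor for sensor in SENSORS if sensor.kind == kind]
```

Keeping the description frozen makes it safe to share as a module-level constant; `sensors_of_kind("lidar")` returns the four lidar entries.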

How to use the ADUUlm-Dataset

We provide tools and scripts for using the dataset with Python in our GitHub repository.
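As a rough illustration of the kind of helper such tools contain, the sketch below pairs each camera image with its label mask for one split. The directory layout (`<root>/<split>/images/*.png` and `<root>/<split>/labels/*.png` with matching file names) and the split names `train`, `val` and `test` are assumptions made for this example, not the layout of the official download; `list_samples` is a hypothetical name.

```python
from pathlib import Path
from typing import List, Tuple

# ASSUMPTION: hypothetical split names and folder layout, for illustration only.
SPLITS = ("train", "val", "test")


def list_samples(root: Path, split: str) -> List[Tuple[Path, Path]]:
    """Pair every image in <root>/<split>/images with the label mask of the
    same file name in <root>/<split>/labels (hypothetical layout)."""
    if split not in SPLITS:
        raise ValueError(f"unknown split {split!r}; expected one of {SPLITS}")
    image_dir = root / split / "images"
    label_dir = root / split / "labels"
    pairs = []
    # Sort so that the sample order is deterministic across runs.
    for image_path in sorted(image_dir.glob("*.png")):
        label_path = label_dir / image_path.name
        if not label_path.exists():
            raise FileNotFoundError(f"missing label for {image_path.name}")
        pairs.append((image_path, label_path))
    return pairs
```

A typical loop would be `for image_path, label_path in list_samples(Path("/data/aduulm"), "train"): ...`, where `/data/aduulm` is a placeholder path. Failing loudly on a missing label avoids silently training on unannotated images.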

Download

Click here for registration and download

Citation

If you use the ADUUlm-Dataset in your scientific work, please cite our BMVC 2020 paper:

@InProceedings{Pfeuffer_2020_TheADUULM-Dataset,

Title = {The ADUULM-Dataset - A Semantic Segmentation Dataset for Sensor Fusion},

Author = {Pfeuffer, Andreas and Sch{\"o}n, Markus and Ditzel, Carsten and Dietmayer, Klaus},

Booktitle = {31st British Machine Vision Conference 2020, {BMVC} 2020, Manchester, UK, September 7-10, 2020},

Year= {2020},

Publisher= {{BMVA} Press}

}

Publications based on the ADUUlm-Dataset

2019:

2020: