The Assembly Assistant

Situation- and User-Adaptive Functionality of Cognitive Technical Systems

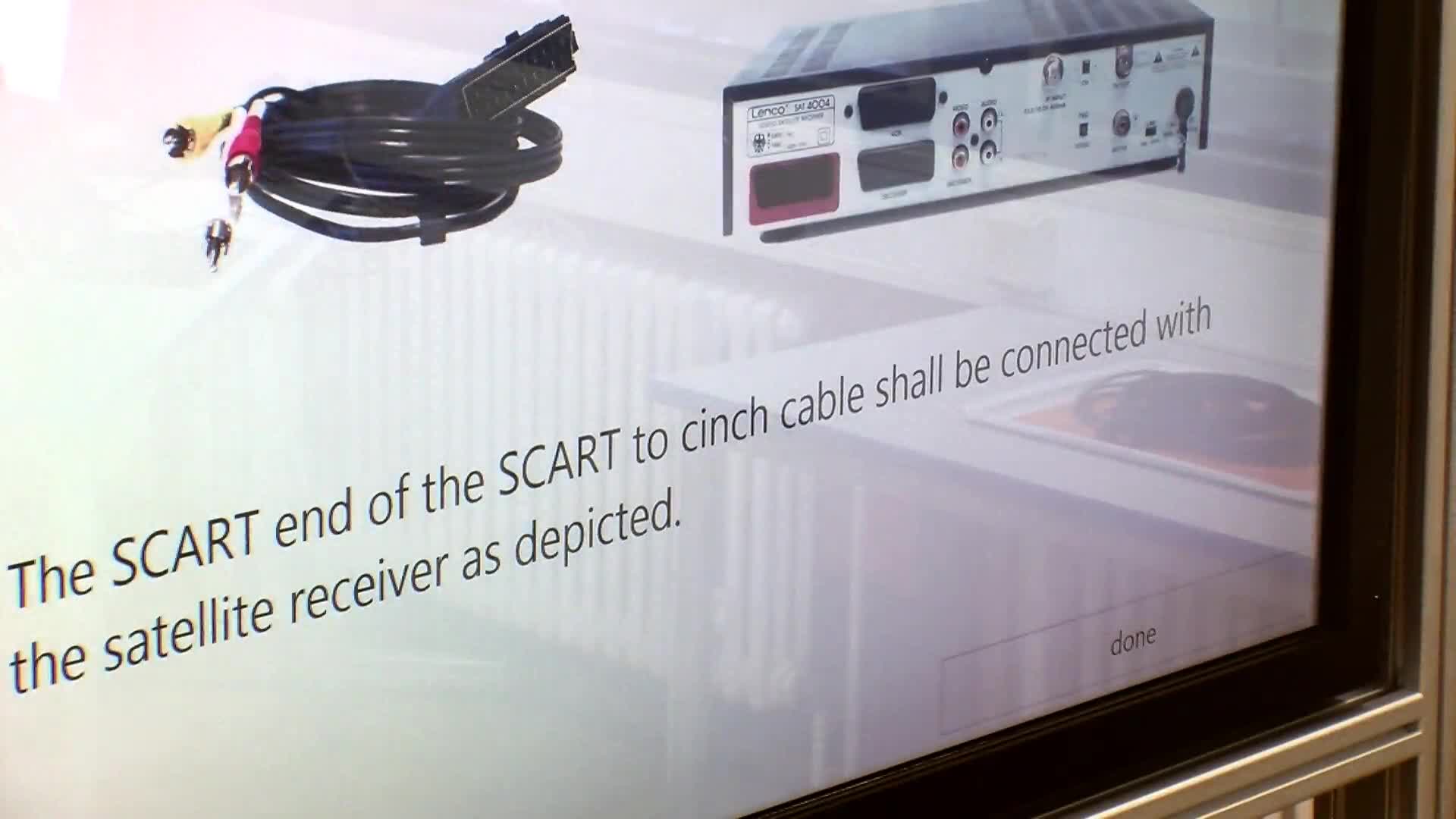

The film shows the interaction between the different components of a companion system. In the scenario shown, an assistance system helps the user assemble a complex home theater and adapts to the user and his or her environment. A set of instructions on how to connect the devices is generated using AI planning techniques. Using adaptive dialog and interaction management, the system conveys these instructions to the user multimodally. The user's knowledge and his or her communication preferences enable individualized interaction between user and system. Drawing on formalized knowledge about the devices and cables that make up the home theater, the system can also generate explanations of the instructions it presents. Plan repair mechanisms allow it to handle unexpected execution failures.
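
The interplay of planning and plan repair described above can be illustrated with a toy STRIPS-style sketch. It is not the system's actual planner or repair mechanism, which the text does not specify: the domain (three HDMI cables linking a player, a receiver and a TV), the names `connect`, `plan` and `execute`, and the failure model (a defective cable) are all hypothetical. "Repair" here is deliberately the simplest strategy, replanning from the current state with breadth-first search.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    cable: str          # cable used by this step (hypothetical domain detail)
    pre: frozenset      # facts that must hold before the step
    add: frozenset      # facts made true by the step
    delete: frozenset   # facts made false by the step


def apply_action(state, action):
    return (state - action.delete) | action.add


def plan(state, goal, actions):
    """Breadth-first forward search: returns a shortest action list reaching goal, or None."""
    start = frozenset(state)
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        current, steps = frontier.popleft()
        if goal <= current:
            return steps
        for action in actions:
            if action.pre <= current:
                nxt = apply_action(current, action)
                if nxt not in seen:
                    seen.add(nxt)
                    frontier.append((nxt, steps + [action]))
    return None


def connect(cable, src, dst):
    # Hypothetical action schema: plugging a free cable between two devices.
    return Action(
        name=f"connect {src} -> {dst} with {cable}",
        cable=cable,
        pre=frozenset({("free", cable)}),
        add=frozenset({("linked", src, dst)}),
        delete=frozenset({("free", cable)}),
    )


CABLES = ["hdmi1", "hdmi2", "hdmi3"]
LINKS = [("player", "receiver"), ("receiver", "tv")]
ACTIONS = [connect(c, s, d) for c in CABLES for s, d in LINKS]


def execute(state, goal, actions, broken):
    """Run the plan step by step; when a step fails, update the state and replan from there."""
    state = frozenset(state)
    log = []
    steps = plan(state, goal, actions)
    while steps:
        action, steps = steps[0], steps[1:]
        if action.cable in broken:
            # Execution failure: the defective cable is set aside for good.
            log.append(f"FAILED: {action.name}")
            state = state - {("free", action.cable)}
            steps = plan(state, goal, actions)  # repair by replanning from the current state
            continue
        state = apply_action(state, action)
        log.append(action.name)
    return goal <= state, log


if __name__ == "__main__":
    start = {("free", c) for c in CABLES}
    goal = frozenset({("linked", s, d) for s, d in LINKS})
    reached, log = execute(start, goal, ACTIONS, broken={"hdmi1"})
    for entry in log:
        print(entry)
    print("goal reached:", reached)
    # FAILED: connect player -> receiver with hdmi1
    # connect player -> receiver with hdmi2
    # connect receiver -> tv with hdmi3
    # goal reached: True
```

Replanning from scratch is the crudest form of repair. A dedicated plan repair mechanism would typically try to preserve as much of the original plan as possible, which matters when the user has already carried out part of the instructions.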


The Ticket Purchase

Multimodal Information Processing

The scenario demonstrates multimodal, dynamic interaction between a person and a technical system that adapts to the situation and to the user's emotional state, using the example of purchasing a train ticket. The companion system adjusts its dialog with the user according to the situational context and the user's emotions; to do so, it simultaneously analyzes and evaluates explicit and implicit input. The user can choose freely among several input modalities and can even switch between them during the interaction. These include conventional modalities such as touchscreen input and spoken language, as well as newer concepts such as navigation by gestures or facial expressions. The scenario also shows how additional background knowledge about the user can be incorporated, for example frequently visited destinations, his or her schedule, or the number of travelers.
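
The combination of explicit input and background knowledge can be illustrated with a small slot-filling sketch. Everything here is a hypothetical illustration rather than the demonstrated system: the `InputEvent` and `UserProfile` types, the slot names, and the rule that explicit input overrides profile-derived defaults are assumptions, and implicit signals such as emotions are not modeled. Because each event only names a slot and a value, the merge does not depend on which modality produced it, which is one simple way to let the user switch modalities mid-dialog.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class InputEvent:
    modality: str   # e.g. "touch", "speech", "gesture" (hypothetical labels)
    slot: str       # which part of the booking the input refers to
    value: object


@dataclass
class UserProfile:
    # Hypothetical background knowledge about the user.
    past_destinations: list
    usual_travelers: int


def fill_booking(events, profile):
    """Merge explicit inputs from any modality with defaults from background knowledge."""
    booking = {}
    # Background knowledge supplies defaults: the most frequent past destination
    # and the usual number of travelers.
    if profile.past_destinations:
        booking["destination"] = Counter(profile.past_destinations).most_common(1)[0][0]
    booking["travelers"] = profile.usual_travelers
    sources = {slot: "profile" for slot in booking}
    # Explicit input overrides the defaults; later events win regardless of modality.
    for event in events:
        booking[event.slot] = event.value
        sources[event.slot] = event.modality
    return booking, sources


if __name__ == "__main__":
    profile = UserProfile(past_destinations=["Berlin", "Ulm", "Berlin"], usual_travelers=2)
    # The user first taps "1 traveler", then corrects it by voice.
    events = [InputEvent("touch", "travelers", 1), InputEvent("speech", "travelers", 3)]
    booking, sources = fill_booking(events, profile)
    print(booking)  # {'destination': 'Berlin', 'travelers': 3}
    print(sources)  # {'destination': 'profile', 'travelers': 'speech'}
```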


The LAST-MINUTE Experiment

Emotion Induction and Emotion Recognition

The scenario shows a companion system recognizing emotions and speech in dialogs, together with the user's multimodal communication signals. The demonstration is based on a dialog between the user and the companion system: the system presents verbal and visual tasks, which the user solves via speech input. The so-called "Last Minute" experiment was conducted with more than 130 subjects. We analyzed how users interact with such "speaking systems" when their input is not constrained. We further studied what kinds of problems can arise when users have to cope with an everyday situation that requires planning, replanning, and changes of strategy. Companion interventions are triggered on the basis of the speech content and the discourse history, both processed in real time. These interventions are supportive comments intended to lower the user's stress level.
